Everything gets better when data moves closer to processing. Datomic can extensively cache its immutable data without concern for coordination or invalidation.

With the valcache feature in today’s Datomic On-Prem release, you can use your local SSDs as large, durable per-process caches on both transactors and peers. Valcache can improve performance, reduce the read load on storage, and stay hot across process restarts.

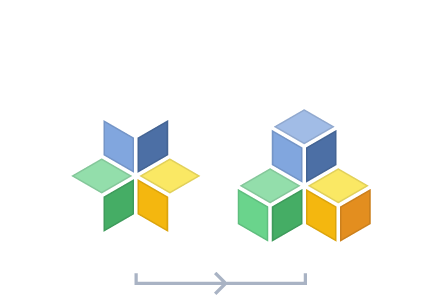

Today’s releases of Datomic Cloud and Datomic On-Prem include two major new features: tuples and database predicates.

Most Datomic queries return tuples, but sometimes you just want maps. Today’s release of the Datomic Cloud client library adds return maps to Datomic datalog. For example, the following query uses the new :keys clause to request maps with :artist and :release keys:
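As a minimal sketch of such a query, assuming a MusicBrainz-style schema (the attribute names `:release/name`, `:release/artists`, and `:artist/name` are illustrative assumptions, not taken from this post), the `:keys` clause lists one symbol per `:find` variable, in the same order:

```clojure
;; Sketch only: attribute names assume a MusicBrainz-style sample schema.
;; Each symbol after :keys names the map key for the :find variable
;; in the same position, so results come back as maps with
;; :artist and :release keys instead of positional tuples.
[:find ?artist-name ?release-name
 :keys artist release
 :where [?release :release/name ?release-name]
        [?release :release/artists ?artist]
        [?artist :artist/name ?artist-name]]
```

With this shape, calling code can read a result as `(:artist row)` rather than `(first row)`, which keeps it stable if the `:find` clause is later reordered.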

Today’s releases of Datomic On-Prem include a new feature: query-stats.

query-stats gives you visibility into the decisions the query engine made while processing your query.

Datomic Cloud is designed to be a complete solution for Clojure application development on AWS. In particular, you can implement web services as Datomic ions behind AWS API Gateway.

The latest release of Datomic Cloud adds HTTP Direct, which lets you connect an API Gateway endpoint directly to a Production Topology Compute Group.